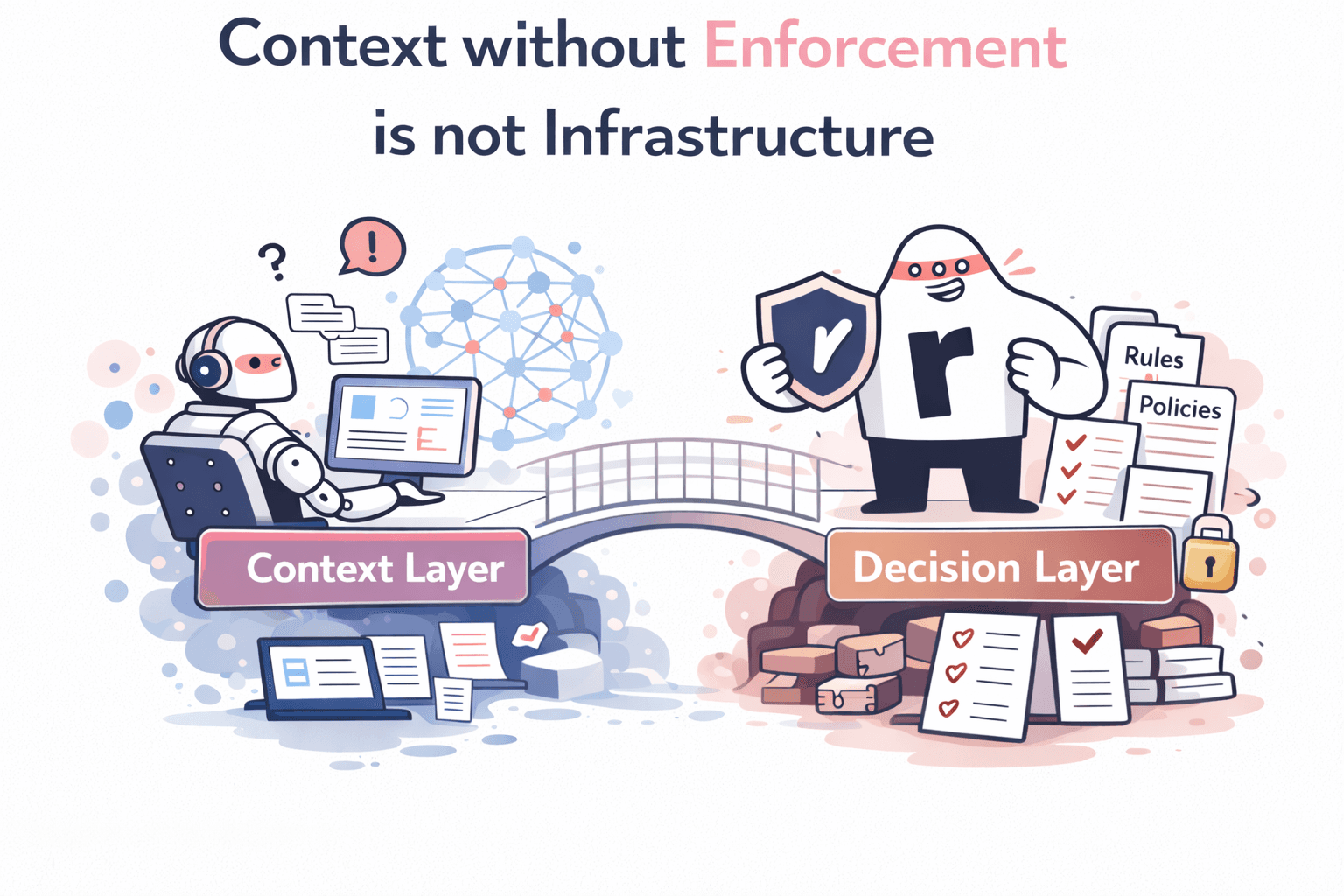

Context without enforcement is not infrastructure

On March 10, Jason Cui and Jennifer Li published a piece on the a16z blog titled "Your Data Agents Need Context." It is one of the clearest diagnoses of enterprise AI failure I have read from a major investor. The thesis is direct: AI agents deployed in enterprise fail not because the models are weak, but because the models are blind. They lack the business context that any competent human would carry into a conversation: the definitions, the exceptions, the tribal knowledge accumulated over years inside an organization.

The framework they propose is structured and credible: make data accessible, build context automatically via LLM, refine it with human expertise, expose it via API or MCP, and maintain it continuously. They cite a 2025 MIT report confirming that the majority of enterprise AI deployments fail due to fragile workflows and insufficient contextual learning.

I agree with all of it.

And I want to explain why the thesis, precisely because it is right, leads to a conclusion that the article stops just short of reaching.

Context layers are necessary. They are not sufficient. And the gap between necessary and sufficient is where most enterprise AI deployments will break in 2026.

The right diagnosis, applied to the wrong failure mode

The a16z article frames the problem around agents that read data and produce answers. An agent asked to analyze revenue growth that doesn't know which table, which definition, which fiscal quarter, which geographic perimeter. A semantically fluent agent that is organizationally illiterate.

This failure mode is real. We encounter versions of it regularly in enterprise deployments. But it describes a world where the worst thing an agent can do is produce an incorrect answer.

That world is rapidly becoming a smaller fraction of what enterprise agents actually do.

In 2026, the agents being deployed in production are not primarily reading agents. They are acting agents. They approve credit applications. They trigger procurement workflows. They initiate refunds. They send compliance-sensitive communications on behalf of the organization. They modify records in systems of record. They make decisions that propagate downstream through business processes before anyone has reviewed them.

For reading agents, the failure mode is: wrong answer. Embarrassing, sometimes costly, correctable.

For acting agents, the failure mode is: unauthorized action. Potentially irreversible. Operationally disruptive. In regulated industries, legally exposed.

A bad answer is an error. A bad action is an incident.

This distinction is not semantic. It defines what kind of infrastructure you need.

What "context" means when your agent executes

Consider a concrete deployment. A credit agent operating inside an automotive dealership network processes financing applications. The model is capable, trained on thousands of cases, calibrated for risk scoring, able to reason about solvency and repayment capacity. It has, in the a16z sense, good context: it understands what a financing application looks like, what the approval criteria are in general terms, what the likely outcome of a given dossier should be.

But the actual decision in production depends on things that are not in the model and cannot be fully captured in a prompt:

- Does the customer's file include the specific regulatory documentation required by jurisdiction?

- Does the application exceed the threshold above which mandatory human review is required?

- Does the dealership profile carry specific contractual conditions that modify the standard approval logic?

- Has there been a recent policy revision, issued two weeks ago by the compliance team in an internal email, that changes the maximum auto-approval ceiling for this product type?

None of these are data questions in the a16z sense. They are not about which table holds the revenue definition or what fiscal quarter the analysis covers. They are authority questions. They define what the agent is permitted to do, not what it knows.

Context graphs that store past decisions to guide the agent's reasoning are part of the answer, but what they provide remains a suggestion: the model is free to follow it or not. For the rules to be applied deterministically, you need more than that.

An agent with perfect context, in the a16z sense, that lacks authority enforcement will approve a 5,000-euro application when the auto-approval ceiling is 2,000 euros. Not because it hallucinated. Not because its reasoning was flawed. Because it had no mechanism to check its proposed action against the operative rules before executing it.

This is the failure mode that a context layer alone cannot prevent.
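To make the gap concrete, here is a minimal sketch of the missing check, using the figures from the example above. The names (`ProposedApproval`, `within_auto_approval_ceiling`, the application id) are hypothetical and chosen for illustration only; a real deployment would load the ceiling from a governed, versioned source rather than a constant.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class ProposedApproval:
    """An action the agent wants to take, not yet executed."""
    application_id: str
    amount_eur: Decimal


# Hypothetical ceiling, taken from the example in the text.
AUTO_APPROVAL_CEILING_EUR = Decimal("2000")


def within_auto_approval_ceiling(
    action: ProposedApproval,
    ceiling: Decimal = AUTO_APPROVAL_CEILING_EUR,
) -> bool:
    """Plain comparison: no model call, no probability involved."""
    return action.amount_eur <= ceiling


# The agent's reasoning may be sound, but the check runs regardless.
proposal = ProposedApproval("app-001", Decimal("5000"))
outcome = "execute" if within_auto_approval_ceiling(proposal) else "do not execute"
print(outcome)
```

The point of the sketch is where the comparison lives: outside the model, between the proposal and the system that would carry it out.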

The architectural gap: between intent and execution

The a16z framework is a pipeline for enriching what an agent knows before it acts. That is genuinely valuable. But enterprise-grade reliability requires a second layer that is structurally different in purpose: it does not inform the agent, it governs it.

At Rippletide, we call this the Decision Context Graph. It is a hypergraph database that encodes not the semantic context of the organization's data, but the authority structure of the organization's decisions: rules, policies, pre-conditions, approval thresholds, compliance constraints, escalation triggers, and the causal relationships between them.

When an agent proposes an action, the decision context graph intercepts it before execution. It evaluates the proposed action deterministically, not probabilistically, against every applicable rule and constraint. The result is a verified decision: approved, blocked, or routed to human review. Every outcome is logged with a complete causal trace: which data, which rule, which outcome, why.
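The interception step can be sketched in a few lines. This is an illustrative toy under stated assumptions, not the Decision Context Graph itself: rules are plain Python functions in a list rather than nodes in a hypergraph, and `Rule`, `Verdict`, `evaluate`, the rule ids, and the 2,000-euro threshold are all hypothetical names and values.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Optional


class Verdict(Enum):
    APPROVED = "approved"
    BLOCKED = "blocked"
    HUMAN_REVIEW = "human_review"


@dataclass(frozen=True)
class Rule:
    rule_id: str
    # Returns None when the rule has no objection to the action.
    check: Callable[[dict], Optional[Verdict]]


@dataclass(frozen=True)
class Decision:
    verdict: Verdict
    trace: tuple  # one (rule_id, result) pair per evaluated rule


def evaluate(action: dict, rules: list[Rule]) -> Decision:
    """Evaluate every rule; BLOCKED outranks HUMAN_REVIEW outranks APPROVED."""
    trace = []
    verdict = Verdict.APPROVED
    for rule in rules:
        result = rule.check(action)
        trace.append((rule.rule_id, result.value if result else "pass"))
        if result is Verdict.BLOCKED:
            verdict = Verdict.BLOCKED
        elif result is Verdict.HUMAN_REVIEW and verdict is not Verdict.BLOCKED:
            verdict = Verdict.HUMAN_REVIEW
    return Decision(verdict, tuple(trace))


# Hypothetical rules for the credit example.
rules = [
    Rule("docs-required",
         lambda a: None if a["has_required_docs"] else Verdict.BLOCKED),
    Rule("review-threshold",
         lambda a: Verdict.HUMAN_REVIEW if a["amount_eur"] > 2000 else None),
]

decision = evaluate({"amount_eur": 5000, "has_required_docs": True}, rules)
print(decision.verdict.value, decision.trace)
```

Every rule is evaluated even after one objects, so the trace records the full picture rather than only the first failure.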

This is what we mean when we say: "The LLM proposes. Rippletide decides."

The LLM is not the problem. LLMs are remarkably capable at reasoning and planning. The problem is that LLMs operate on probabilities, i.e., they output what is most plausible: the most likely correct action given their context. But "most likely correct" is not the standard that enterprise compliance teams, audit functions, or regulatory bodies accept. They require provably correct: the action was validated against the operative rules before it was executed, and there is a verifiable record to demonstrate it.

A probabilistic reasoning engine cannot provide a deterministic guarantee. That guarantee requires a different kind of infrastructure. Evaluation, enforcement and authority.

Three questions your current architecture cannot answer

Here is a practical test for any enterprise team currently deploying or evaluating AI agents in production.

Question 1: Your agent made a decision yesterday. Can you reproduce it?

Not explain it. Not reconstruct it approximately. Reproduce it, with the same inputs, the same state, the same rules, arriving at the same output, verifiably. In the overwhelming majority of current enterprise deployments, the answer is no. LLM-based decisions are non-deterministic by design. The same prompt, the same model, the same data can produce different outputs on different runs. This is not a bug. It is how the technology works. But it means that reproducing a specific past decision, which any audit requires, is not possible with an LLM alone.
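What reproducibility looks like in practice can be shown with a small sketch. It assumes the decision is a pure function of the inputs and a pinned ruleset version. The version labels, the 3,000-euro value for the older ruleset, and the function name `decide` are hypothetical.

```python
import hashlib
import json

# Hypothetical versioned rulesets; the older ceiling is invented for the example.
RULESETS = {
    "2026-01": {"auto_approval_ceiling_eur": 3000},
    "2026-03": {"auto_approval_ceiling_eur": 2000},
}


def decide(inputs: dict, ruleset_version: str) -> dict:
    """Pure function: same inputs and same ruleset version give the same record."""
    rules = RULESETS[ruleset_version]
    within = inputs["amount_eur"] <= rules["auto_approval_ceiling_eur"]
    record = {
        "inputs": inputs,
        "ruleset_version": ruleset_version,
        "verdict": "approved" if within else "human_review",
    }
    # Canonical JSON so the fingerprint does not depend on key order.
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    record["fingerprint"] = hashlib.sha256(canonical.encode("utf-8")).hexdigest()
    return record


# Yesterday's decision, made under the ruleset in force at the time.
original = decide({"application_id": "app-001", "amount_eur": 2500}, "2026-01")

# Today's audit: replay from the stored inputs and the stored version.
replay = decide(original["inputs"], original["ruleset_version"])
print(replay == original)
```

Replaying under the current ruleset (`"2026-03"`) would give a different verdict, which is exactly why the version has to be stored alongside the inputs.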

Question 2: How does your agent know what it is authorized to do?

This information lives where, exactly? In the system prompt? In a policy document retrieved via RAG? If your agent's authority boundaries live in a prompt, they are not governed. They are assumed. A system prompt updated six months ago, before a policy revision, before a regulatory change, before an acquisition that modified the approval structure, is a liability, not a control. Rules that must remain stable and enforceable across time, across agents, across teams, require a structured layer that is versioned, auditable, and enforced, not hoped for.

Question 3: Who is accountable when your agent acts incorrectly in production?

This question becomes acutely uncomfortable when the agent acted without leaving a trace that allows anyone to reconstruct why. The absence of a decision trace is not merely a technical inconvenience. In regulated industries, it is a compliance failure. In any industry, it is a governance gap that will eventually produce an incident.

These three questions do not have good answers in an architecture that relies on a context layer alone. They require a decision layer: a deterministic enforcement engine that sits between the agent's intent and the world, validates every proposed action before execution, and writes a complete causal trace of every decision.

The a16z argument for external infrastructure applies here too

The a16z article includes a sentence that deserves more emphasis than its placement in the piece suggests: "Realistically, not every enterprise can, or should, build this in house, and we're starting to see various solutions coming to market."

They say this about the context layer. The argument applies with even more force to the decision layer.

Building a context layer in-house is a substantial but bounded engineering project: extract metadata, build ontologies, wire up an MCP server, maintain the pipeline. Difficult. Doable.

Building a decision enforcement layer in-house is a different order of problem. The rules change. Policies are revised. Approval thresholds are updated. New compliance requirements are introduced. New agent types are deployed. The enforcement layer must be versioned, maintained, integrated across every agent in the fleet, and kept consistent with the operative rules of the organization at every moment.

This is not a project. It is a system. And it is the kind of system that, built ad hoc in-house, becomes a liability: underdocumented, underresourced, lagging behind the rule changes it is supposed to enforce.

The same economic argument that justifies buying a context layer from a specialist applies to the decision layer. The organization's core competency is not building and maintaining decision enforcement infrastructure. It is running its business with reliable agents.

What we have learned from production deployments

In February 2026, we published an account of the Neo4j Context Graph Meetup in San Francisco, where Yann Bilien presented our work on automatic ontologies for the Decision Context Graph. One of the core insights from that session, which resonated across the engineering teams present, is that contradictions in authority structures must be resolved at build time, not inference time. If two sources of organizational policy disagree on an approval threshold, that conflict must be identified and resolved during graph construction. It cannot be left for the language model to arbitrate at runtime, because the language model will not consistently arbitrate it the same way twice.
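A minimal sketch of build-time conflict detection, assuming policy statements have already been extracted as (source, parameter, value) triples. The source names, the parameter name, and `build_thresholds` are hypothetical; real graph construction involves far more than a dictionary.

```python
from collections import defaultdict


class PolicyConflict(Exception):
    """Raised at build time when sources disagree on a parameter."""

    def __init__(self, conflicts: dict):
        super().__init__(f"conflicting policy values: {conflicts}")
        self.conflicts = conflicts


def build_thresholds(statements: list[tuple[str, str, int]]) -> dict[str, int]:
    """Collect one value per parameter, or refuse to build."""
    seen: dict[str, dict[int, list[str]]] = defaultdict(dict)
    for source, parameter, value in statements:
        seen[parameter].setdefault(value, []).append(source)
    conflicts = {p: dict(v) for p, v in seen.items() if len(v) > 1}
    if conflicts:
        raise PolicyConflict(conflicts)
    return {p: next(iter(v)) for p, v in seen.items()}


# Two hypothetical sources disagree on the same threshold.
statements = [
    ("credit-policy-handbook", "auto_approval_ceiling_eur", 5000),
    ("compliance-email", "auto_approval_ceiling_eur", 2000),
]

try:
    build_thresholds(statements)
    conflict_detected = False
except PolicyConflict as exc:
    conflict_detected = True
    print(exc)
```

The build fails loudly and names both sources, so a human resolves the disagreement once, instead of the model resolving it differently on each run.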

This is a precise articulation of why context layers and decision layers are complementary rather than substitutable. A context layer enriches the semantic understanding available to the agent at inference time. A decision layer eliminates the ambiguities that cannot be safely left to inference time at all.

We have seen this play out in our two deepest production deployments.

At a major spirits industry player, a customer service agent deployed on the Decision Context Graph moved from 85% ticket resolution accuracy to 96%. The gain was not primarily from better LLM reasoning. It was from structuring the authority logic (which claims the agent could make, which cases required escalation, which responses were permissible under which conditions) in the graph rather than in the prompt. The agent became more accurate because it became more constrained. Constraints, properly structured, are not limitations. They are the mechanism by which an agent's outputs become reliable enough to trust.

At a major automotive manufacturer, a credit agent deployed for dealership financing operates under strict compliance constraints that vary by jurisdiction, product type, and dealership profile. The Decision Context Graph enforces these constraints before any approval is executed. Every decision is traceable. The compliance team has audit-ready logs before they ask for them. The operations team has an agent that scales without introducing regulatory exposure.

In both cases, the core value was not that the agents became smarter. It was that they became accountable.

The five-step framework, extended

The a16z framework is a solid foundation for context infrastructure. I want to propose an extension, not a replacement, that accounts for the decision layer.

Step 1: Make your data accessible. Agreed. Data that cannot be reached cannot inform decisions.

Step 2: Build context automatically via LLM. Agreed. LLMs are efficient at extracting semantic structure from unstructured organizational content.

Step 3: Refine with human expertise. Agreed, and critical. This is where the implicit rules, conditional exceptions, and historically contingent knowledge get captured. As the a16z article correctly observes, this step cannot be fully automated. The most important context is the kind that no one has ever written down.

Step 4: Expose via API or MCP. Agreed. Context that cannot be queried by agents at runtime has no operational value.

Step 5: Maintain continuously. Agreed. Context decays. The organizational knowledge graph from six months ago is not the same as today's.

Now, the extension:

Step 6: Structure authority rules in a Decision Context Graph. Alongside the semantic context layer, encode the authority structure of the organization: policies as executable constraints, approval thresholds as versioned parameters, compliance rules as deterministic checks. This layer does not operate probabilistically. It does not rely on the LLM to remember its rules. It enforces them before any action reaches production systems.

Step 7: Enforce pre-execution. Every agent action is intercepted by the decision layer before it executes. The agent either has the authority and the verified data to act, or it does not. No gray zones. No heuristic assessments by the language model about whether a proposed action is probably within bounds.

Step 8: Write the decision trace. Every decision, approved, blocked, or escalated, is logged with a complete causal record: which data point was consulted, which rule applied, which outcome resulted, and why. This is not optional. It is the mechanism that makes enterprise agents auditable, defensible before regulators, and operationally trustworthy.

The infrastructure pattern the industry is converging on

The Neo4j Context Graph Meetup in early March, attended by teams from Contextually, Indykite, Rippletide, and others, produced a convergent architectural picture that deserves to be stated explicitly.

Models generate responses and plan actions. Agent frameworks coordinate multi-step workflows. A structured memory layer, the context layer, maintains entity relationships, semantic definitions, and organizational knowledge. A governance and execution layer ensures safety and reproducibility before actions reach production systems.

These are four distinct layers. They are not alternatives. They are composable infrastructure components that solve different problems.

The industry is arriving at this architecture independently, not because any single vendor proposed it, but because the failure modes of LLM-only agents in production keep pointing back to the same missing layer. You can improve the model. You can improve the retrieval. You can enrich the context. And you will still get governance failures if there is no deterministic enforcement between intent and action.

Gartner projected in early 2026 that by 2030, 50% of AI agent deployment failures will be due to insufficient governance. This is not a prediction about model capability. It is a prediction about infrastructure maturity. The models will continue to improve. The organizations that ship reliable, trustworthy agents in production will be the ones that built the governance layer before the incident made it mandatory.

The multi-agent problem: why governance must be shared, not siloed

There is one dimension of this problem that neither the a16z article nor most current conversations about agent governance address adequately: multi-agent architectures.

A single agent with a well-structured decision layer is a tractable governance problem. You define the authority boundaries, encode them in the graph, enforce them at runtime.

But enterprise deployments are moving toward orchestration patterns where multiple specialized agents collaborate on complex workflows: a planning agent delegates to execution agents, each taking micro-decisions that compose into consequential actions. In this architecture, the governance question becomes: who is accountable for the composed outcome?

If each agent has its own authority definition encoded in its own prompt or its own context pipeline, the organization ends up with a fragmented governance model that cannot reason about the aggregate behavior of the system. A set of actions that are each within bounds for an individual agent might constitute a policy violation when taken together.

This is why we believe the Decision Context Graph must be a shared infrastructure layer, not a per-agent configuration. The same authority structure, the same version of the rules, the same audit trail, available to every agent in the deployment. One decision layer for the organization, not a decision layer per agent.
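The aggregate problem is easy to show with a toy example. The sketch below assumes a refund scenario with a per-action limit and a per-customer aggregate limit. The class name `SharedDecisionLayer`, the agent ids, and the amounts are all hypothetical, and a real shared layer would persist its state rather than hold it in memory.

```python
from collections import defaultdict
from decimal import Decimal


class SharedDecisionLayer:
    """One authority check and one audit log for every agent."""

    def __init__(self, per_action_limit: Decimal, aggregate_limit: Decimal):
        self.per_action_limit = per_action_limit
        self.aggregate_limit = aggregate_limit
        # Refunds already approved per customer, across all agents.
        self._committed = defaultdict(Decimal)
        self.audit_log = []

    def authorize(self, agent_id: str, customer_id: str, amount: Decimal) -> str:
        total_if_applied = self._committed[customer_id] + amount
        if amount > self.per_action_limit:
            verdict = "blocked:per_action_limit"
        elif total_if_applied > self.aggregate_limit:
            verdict = "blocked:aggregate_limit"
        else:
            verdict = "approved"
            self._committed[customer_id] = total_if_applied
        self.audit_log.append((agent_id, customer_id, str(amount), verdict))
        return verdict


layer = SharedDecisionLayer(Decimal("200"), Decimal("300"))

# Three agents each propose a refund that is fine in isolation.
results = [
    layer.authorize(agent, "customer-42", Decimal("150"))
    for agent in ("agent-a", "agent-b", "agent-c")
]
print(results)
```

Each 150-euro refund passes the per-action limit, so three separate per-agent checks would approve all three. Only a layer that sees the combined total can block the third.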

This is the architectural conclusion that follows from taking the governance problem seriously at enterprise scale: the decision layer is organizational infrastructure, not agent configuration.

What this means for enterprise teams evaluating agent deployment today

The practical implications are direct.

If you are building a context layer, follow the a16z framework. It is well-designed and addresses a real problem. Invest in the human refinement step; it is the hardest and most important.

If you are deploying agents that do anything beyond reading and answering, meaning agents that approve, trigger, modify, commit, or execute, then a context layer is necessary but not sufficient. You need a decision layer. The decision layer is not a replacement for the context layer. It is the layer that makes the context layer operationally safe to act on.

And if you are evaluating whether to build or buy: ask the three questions from earlier in this piece. If your team cannot reproduce a past agent decision, cannot precisely locate where your agent's authority boundaries are encoded and governed, and cannot identify who is accountable when an agent acts incorrectly, you have a governance gap that will produce an incident before it produces a solution.

The a16z thesis is correct. Context is missing from most enterprise agent deployments, and it needs to be built, structured, maintained, and exposed as infrastructure. The only addition I would make is this:

Context without enforcement is not infrastructure. It is visibility. And visibility, without the mechanism to act on it before something goes wrong, is not governance.

Context layers are the prerequisite. Decision layers are the guarantee.

Further reading on this blog

For those who want to go deeper on the technical architecture: